Last week, I presented a model of misinformation that draws heavily on insights from values analysis. The Care value (i.e. causing pain is wrong, especially to the vulnerable) has become the basis of most polite debate, as commenters try to outline why their chosen policies create more tangible benefit (e.g. economic growth, lives saved, etc.). Many people would argue that the prominence of the Care value is a positive development, because it ensures that public policy is designed to bring the most happiness to the greatest number of people.

However, such an approach has pushed other moral arguments to the margins, even if they remain salient to people’s decision-making. For example, it is no longer politically correct to claim that your country is simply better than others’, a viewpoint that draws heavily on the Loyalty value (i.e. people have special moral responsibilities to in-group members). That’s unlikely to be a winning argument in public, polite debate.

That being said, it is common for people to believe that their country is special, and this view drives many people’s policy preferences on various topics, including immigration. Since this perspective can’t be expressed publicly (i.e. it is ‘impolite’), in order to engage in debates about the optimal number of immigrants, people who rely on the Loyalty value need to manufacture arguments that are more palatable in the public forum.

These arguments often rely on fabricated or misinterpreted information. For example, a common argument in the U.S. immigration debate is that undocumented/illegal/irregular migrants are dangerous. They’re not, but that’s not necessarily important. Immigrants are often treated with suspicion because they are ‘outsiders’ – and intend to benefit from a system designed for ‘insiders’ – a reaction that is rooted in our moral intuitions. It’s the same evolutionary hardware that makes us trust our families more than strangers (and to warn our children against even speaking to strangers in public places). The moral reaction drives the viewpoint, and the misinformation only serves to justify the reaction to other people.

I don’t want to pretend that this model of fake news is a slam dunk. A lot of work needs to be done before I can confidently say that it is true. However, it is a model that is worth exploring. If true, it would have several important implications for policy development:

Put fewer resources into fact-checking.

Fact-checking is a popular response to misinformation. The logic of fact-checking is eminently rational and often incorrect: people hold indefensible beliefs (the Earth is flat, vaccines cause autism, etc.) because they read misinformation. Consequently, a logical method for reducing the prevalence of these beliefs is to present credible alternative evidence and place warnings on dubious articles (à la Facebook and Twitter). No misinformation means no harmful belief. Problem solved!

Notice the direction of causation under this model. The misinformation produces the belief. In contrast, a values-based understanding of misinformation relies on a different direction of causation. The belief comes first, and it creates a market for misinformation, one that enterprising writers and conspiracy theorists are very willing to supply. Under this model, debunking efforts resemble a Sisyphean game of whack-a-mole: a new piece of misinformation is produced to meet existing demand, it gains traction because it is credible enough to be useful, information gatekeepers debunk it (not an easy task), and new misinformation is then fabricated to refill the niche. This would be a never-ending cycle of work, and, more importantly, relatively few people’s underlying beliefs would be changed by it.

I don’t mean to suggest that fact-checking should stop. Although many beliefs may derive from moral intuitions, misinformation helps shape those intuitions into a concrete view. For example, the Sanctity value (i.e. certain actions are inherently dirty and polluting) may encourage vaccine hesitancy, because injecting artificial substances into people can be interpreted as the corruption of the pure human body. However, this intuition doesn’t necessarily mean that people will believe that the COVID-19 vaccine contains microchips. Some other people may instead conclude that vaccination is fine but only with an additional procedure (e.g. eating fats, bathing with baking soda) to purge the body of the impure elements. Both are plausible responses to the moral concerns about vaccination, and not all false beliefs are equally harmful. It would be preferable for people to be vaccinated and “purge” their body rather than avoid vaccination altogether. Efforts focused on the most harmful beliefs won’t end misinformation, but they could encourage more benign beliefs to rise to the top.

As well, debunking misinformation sometimes does alter people’s beliefs. As demonstrated in this article, it’s possible for your rational mind to override your intuitions. However, this experience is extremely uncomfortable, so most people avoid it. Even so, investments in fact-checking may cause some people to reevaluate their beliefs, which is a positive development.

In addition, some misinformation is so damaging that government has no choice but to act forcefully to correct it. For example, Q-Anon, the bizarre conspiracy theory that the U.S. is run by a cabal of pedophiles, requires a concerted response. Dedication to this cause has become cultlike, and believers played central roles in the January 6 sack of the U.S. Capitol. Q-Anon is considerably more harmful than other conspiracy theories (e.g. the moon landing was faked, the CIA killed JFK), because it has clearly incited real violence and organized disparate groups into a single cause. Consequently, an effort to correct the “facts” that underpin the theory is needed, even if we can expect that the effectiveness of such efforts would be limited. Even deradicalizing a few thousand Q-Anon believers would be worth the effort.

Despite these benefits, it is important to be pragmatic about the effectiveness of fact-checking. At most, only a relatively small proportion of the consumers of misinformation will be swayed by Snopes. Scarce resources should be devoted to other strategies of combatting misinformation alongside fact-checking.

Pursue active, morally responsive communication strategies when fact-correcting is necessary.

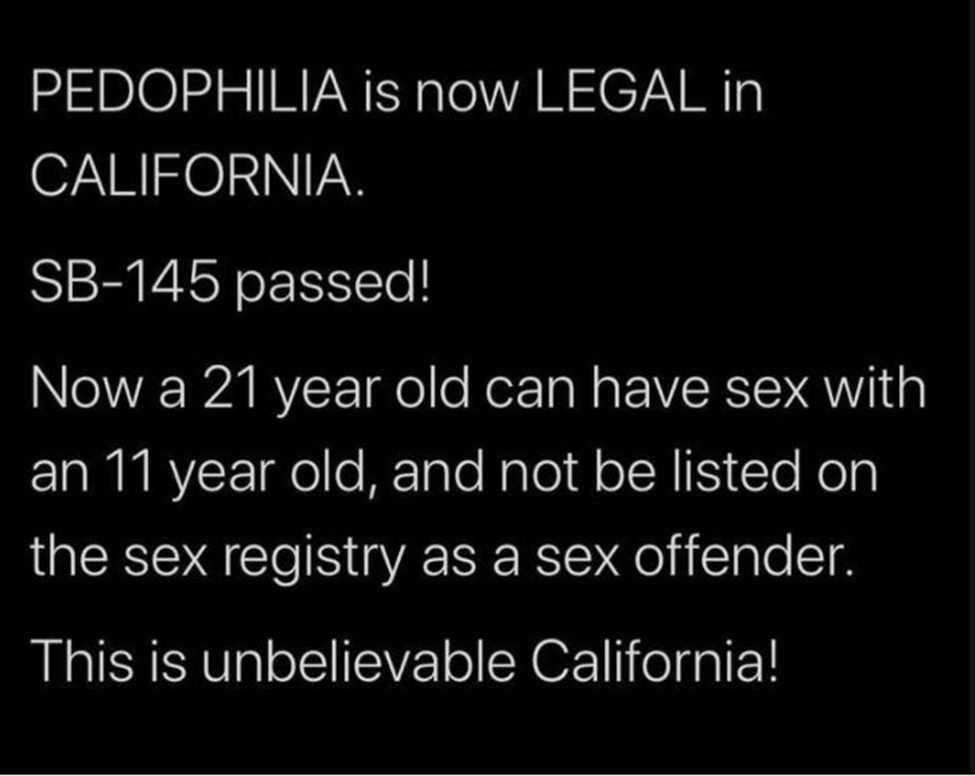

Values analysis can help hone communications strategies to better resonate with the moral compasses of the audience, by aiming for the gut rather than the head. For example, misinformation abounded when Bill SB-145 passed in California. The Bill equalized the treatment of individuals who engage in vaginal sex with minors and those who engage in other kinds of sexual relations with minors. Under the old rules, an 18-year-old who had vaginal sex with a 17-year-old could be put on the sex offenders’ list, while an 18-year-old who had anal or oral sex with a 17-year-old had to be put on the sex offenders’ list (homosexual acts being more likely to fall into this latter category). Bill SB-145 gave judges the discretion to determine whether individuals convicted under these laws should be put on the sex offenders’ list, regardless of the specific act that was performed. Regardless of your views on crime and punishment, this is a reasonable legal reform at least worthy of consideration.

However, the actual contents of the Bill were overtaken by the outrage. Q-Anon believers claimed that the Bill “legalized pedophilia”, and the issue blew up on social media. The Bill appeared to weaken the reach of the sex offenders’ list – and in some ways, it did – which strongly violated the Care and Sanctity values and led to a fierce moral reaction.

Once the Internet focused in on the issue, the myth-busting began, as politicians attempted to more explicitly outline what the Bill did. SB-145 sponsor State Senator Scott Wiener set up a fact-checking page, which clearly outlined the contents of the Bill and “debunked” some of Q-Anon’s charges. This is helpful, but it’s missing a key element: this page does not make an explicit moral argument in favour of the Bill. Rather, it focuses on the technical aspects of the sex offenders’ registry and how SB-145 would improve matters. This will not resonate with many people’s moral compasses. It comes across as cold, calculating, and confusing. No wonder that misinformation about SB-145 was viewed by millions of people.

These fact-checking efforts should have been complemented by a strong moral argument in favour of the legislation. Fundamentally, the legislation was about fairness, as similar sexual acts were receiving different punishments. This is an unfair double standard. While Senator Wiener’s fact-checking page attempts to correct misconceptions about the Bill, it does not use the word “fair”. While technically correct, the page does not make a clear effort to be morally responsive. It aims for the head, not the gut.

Furthermore, the negative reaction to the Bill was predictable. It’s not surprising that modifying the sex offenders’ list would create a major moral reaction, especially at a time when Q-Anon acolytes are preaching that the government of the U.S. is filled with pedophiles. But fact-checking is inherently reactive. Peddlers of misinformation have the initiative, and the fact-checkers can only respond after damage has already been done.

Thankfully, values analysis allows policymakers to predict moral reactions and adapt communications strategies to them. Here is the press release that accompanied the Bill. It opens with a long, winding, and frankly confusing explanation of what the Bill does. Only at the end of the document are moral concerns raised: Senator Wiener is quoted as saying that the old law “ruined people’s lives and made it harder for them to get jobs, secure housing, and live productive lives. It is time we update these laws and treat everyone equally.” These are strong moral arguments; why are they hiding at the end of the press release?

This is not morally responsive communication. The content of the Bill speaks to your rational mind. The reason for the Bill speaks to your moral compass. Communication dealing with morally controversial policy needs to resonate with people’s values. The first piece of information in this press release should be a clear statement of the unfairness of the existing laws and a declaration that this legislation would fix it. Then, short social media posts publicizing this view should have been shared immediately after the Bill was introduced. If the moral reaction is predictable, it should be addressed in advance, before misinformation has the chance to spread.

Create morally responsive policies to limit declines in trust.

Beyond fact-checking, lots of ink is being spilled about an ongoing decline in public trust, which has been directly linked to the increasing prevalence of misinformation. Low levels of trust could interact with misinformation in two possible ways. First, people could read fake news, believe salacious claims about their governments, and lose trust in public institutions. In other words, the misinformation causes distrust.

This is possible. One study found that misinformation has a significant effect on trust in institutions, although consuming misinformation can increase trust, depending on the political views of the consumer and the party in power. Regardless, under this model, solutions to misinformation include fact-checking, altering search algorithms, and reducing article sharing on social media.

However, it is also possible that the interaction between trust and misinformation works in the opposite direction. People have low trust in the media and in political institutions for other reasons, which prompts them to seek out alternative sources of information. The low trust creates a market for misinformation, which alternative sources are happy to provide.

There is some evidence to support this explanation. The U.S., which hosts perhaps the most well-developed market for misinformation, was experiencing declining levels of trust long before social media made misinformation so widely accessible. As far back as 1994, only one in five Americans trusted the federal government to do the right thing. No wonder that conspiracy theories spread so widely in such a low-trust environment. Under this model, the solution is not primarily to debunk fake news. Rather, it is to increase trust, which will reduce demand for misinformation.

I argue that morally responsive policy development and communication stand to serve this goal. If citizens believe that their governments are pursuing immoral policies, then their trust in government is likely to decrease. In fact, there is some solid research to back this up: a 2018 OECD study suggests that government values have a significant impact on public trust in institutions. However, much more research needs to be done on the relationship between trust and misinformation before the direction of causality can be fully established (either misinformation reducing trust, or low trust enabling misinformation).

Conclusion

Will this approach end misinformation? Of course not. There will always be conspiracy theorists, and social media is unlikely ever to be a good place to get accurate information or balanced debate. However, the goal shouldn’t be to end all misinformation. This is about harm reduction. The fewer people who consume misinformation, the better. And in achieving this objective, values analysis shows promise.